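The recommended collection approach is for each service to write its application logs to local disk, for filebeat to ship them to kafka, and for the monitoring engine's logstash to process them and write them to elasticsearch.

The goal of application log integration is to collect the application logs, parse them into the target log fields according to the specified rules, and store them in elasticsearch for correlation and querying.

The collection granularity follows the actual deployment. In the example below, all services of one project share one kafka topic, and each service maps to one ES index.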

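Log format requirements

In the system as currently deployed, application logs and metrics data share the same elasticsearch.

To use a different ES for logs, modify the monitor configuration: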

| spring: |

|---|

| elasticsearch: |

| host: elasticsearch |

| port: 9300 |

| clustername: docker-cluster |

| mapper: classpath:/esmapper// .xml,classpath:esmapper/*.xml |

| rest: |

| uris: http://elasticsearch:9200 |

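| loguris: http://elasticsearch:9200 (configuration for the log ES) |

For now, the same ES is used for both.

Once the application logs have been processed into the required format, they are written to elasticsearch. The format requirements are as follows:

Index name:

service name + '-' + date (for example: easeservice-mgmt-control-2021.05.17)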
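The naming rule above can be sketched as a small helper. This is only an illustration: the function name `index_name` is hypothetical and not part of the monitoring system, and the date format is inferred from the example index name.

```python
from datetime import date


def index_name(service: str, day: date) -> str:
    """Build an index name as <service>-<YYYY.MM.DD> (hypothetical helper)."""
    if not service:
        raise ValueError("service must not be empty")
    return f"{service}-{day.strftime('%Y.%m.%d')}"


print(index_name("easeservice-mgmt-control", date(2021, 5, 17)))
# easeservice-mgmt-control-2021.05.17
```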

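Fields:

| Name | Description |
|---|---|
| log | Log content (raw line) |
| stream | Input stream type |
| logLevel | Log level |
| service | Service name |
| traceId | Trace ID |
| id | Span ID within the trace |
| pid | Process ID |
| threadId | Thread name |
| content | Actual message content |
| message | Same as log |
| @timestamp | Log timestamp |
| tag | Tag |

Example: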

| The raw log line is: |

|---|

| 2021-05-16 09:16:47.572 DEBUG [easeservice-mgmt-control,a07018a22ba3c46c,a07018a22ba3c46c,true] 9 --- [io-38013-exec-8] c.m.e.a.security.AuthorizationFilter : receive header[Authorization=Bearer 1698db1d2cb5426dac57e74e6a2d04c3]\n |

| After parsing into JSON it becomes: |

| { |

| "log" : "2021-05-16 09:16:47.572 DEBUG [easeservice-mgmt-control,a07018a22ba3c46c,a07018a22ba3c46c,true] 9 --- [io-38013-exec-8] c.m.e.a.security.AuthorizationFilter : receive header[Authorization=Bearer 1698db1d2cb5426dac57e74e6a2d04c3]\n", |

| "stream" : "stdout", |

| "logLevel" : "DEBUG", |

| "service" : "easeservice-mgmt-control", |

| "traceId" : "a07018a22ba3c46c", |

| "id" : "a07018a22ba3c46c", |

| "pid" : "9", |

| "threadId" : "io-38013-exec-8", |

| "content" : "c.m.e.a.security.AuthorizationFilter : receive header[Authorization=Bearer 1698db1d2cb5426dac57e74e6a2d04c3]", |

| "message" : "2021-05-16 09:16:47.572 DEBUG [easeservice-mgmt-control,a07018a22ba3c46c,a07018a22ba3c46c,true] 9 --- [io-38013-exec-8] c.m.e.a.security.AuthorizationFilter : receive header[Authorization=Bearer 1698db1d2cb5426dac57e74e6a2d04c3]\n", |

| "@timestamp" : "2021-05-17T17:19:32.031877669+08:00", |

| "tag" : "es-control.var.log.containers.easeservice-mgmt-control-675c86b489-s655l_default_easeservice-mgmt-control-5418c4833362f8c3a8744c41513bbc7fff2174d08741f0e3dbc2b8db4ec175a6.log" |

| } |

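| Apart from log, all other fields are produced by parsing. service and @timestamp must not be empty; leave any other field empty if it is not available. |

| ES needs a template that defines the type of each field: |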

| PUT _template/easeservice-template |

| { |

| "order" : 1, |

| "version" : 100, |

| "index_patterns" : [ // set these according to your index names |

| "easeservice-*", |

| "easegateway-*", |

| "easestash-*", |

| "ease-*", |

| "mesh-*" |

| ], |

| "settings" : { |

| "index" : { |

| "analysis" : { |

| "normalizer" : { |

| "lowercase_normalizer" : { |

| "filter" : [ |

| "lowercase" |

| ], |

| "type" : "custom" |

| } |

| } |

| }, |

| # Remove this entry if no lifecycle policy is configured in ES |

| "lifecycle" : { |

| "name" : "easeservice-lifecycle-policy" |

| } |

| } |

| }, |

| "mappings" : { |

| "dynamic" : true, |

| "properties" : { |

| "threadId" : { |

| "normalizer" : "lowercase_normalizer", |

| "index" : true, |

| "type" : "keyword" |

| }, |

| "traceId" : { |

| "normalizer" : "lowercase_normalizer", |

| "index" : true, |

| "type" : "keyword" |

| }, |

| "logLevel" : { |

| "normalizer" : "lowercase_normalizer", |

| "index" : true, |

| "type" : "keyword" |

| }, |

| "service" : { |

| "normalizer" : "lowercase_normalizer", |

| "index" : true, |

| "type" : "keyword" |

| }, |

| "pid" : { |

| "type" : "keyword" |

| }, |

| "id" : { |

| "normalizer" : "lowercase_normalizer", |

| "index" : true, |

| "type" : "keyword" |

| } |

| } |

| }, |

| "aliases" : { } |

| } |
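To make the field mapping from the example more concrete, here is a minimal Python sketch of the parsing step. It is not the parser used by the monitoring engine: the regular expression is an assumption derived from the single sample line above, and the names `LINE_RE`, `parse_line` and `missing_required` are hypothetical. `@timestamp` is left to the pipeline, since in the example it differs from the time printed in the log line.

```python
import re

# Assumed line layout, taken from the sample line only:
# <date> <time> <LEVEL> [<service>,<traceId>,<spanId>,<flag>] <pid> --- [<thread>] <content>
LINE_RE = re.compile(
    r"^\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3}\s*"
    r"(?P<logLevel>[A-Z]+)\s+"
    r"\[(?P<service>[^,\]]*),(?P<traceId>[^,\]]*),(?P<id>[^,\]]*),[^\]]*\]\s+"
    r"(?P<pid>\d+)\s+---\s+"
    r"\[\s*(?P<threadId>[^\]]*)\]\s+"
    r"(?P<content>.*)$",
    re.DOTALL,
)

PARSED_FIELDS = ("logLevel", "service", "traceId", "id", "pid", "threadId", "content")
REQUIRED_FIELDS = ("service", "@timestamp")


def parse_line(raw, stream="stdout", tag=""):
    """Return a document with the target fields; fields that cannot be parsed stay empty."""
    doc = {"log": raw, "message": raw, "stream": stream, "tag": tag}
    doc.update({name: "" for name in PARSED_FIELDS})
    match = LINE_RE.match(raw)
    if match:
        for name in PARSED_FIELDS:
            doc[name] = match.group(name).rstrip("\n")
    return doc


def missing_required(doc):
    """List the required fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not doc.get(name)]


raw = (
    "2021-05-16 09:16:47.572 DEBUG "
    "[easeservice-mgmt-control,a07018a22ba3c46c,a07018a22ba3c46c,true] 9 --- "
    "[io-38013-exec-8] c.m.e.a.security.AuthorizationFilter : "
    "receive header[Authorization=Bearer 1698db1d2cb5426dac57e74e6a2d04c3]\n"
)
doc = parse_line(raw)
print(doc["service"], doc["logLevel"], doc["threadId"])
print(missing_required(doc))  # @timestamp is not set by this sketch
```

A check like `missing_required` could run before indexing, so that documents without a service or @timestamp are caught early.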

Install filebeat

- Upload the filebeat package and extract it

| tar xvf filebeat-xxx.tar.gz |

|---|

- Modify the filebeat configuration

| # Configure filebeat.yml |

|---|

| output.kafka: |

| enabled: true |

| #hosts: ["kafka-ip1:port1", "kafka-ip2:port2", "kafka-ip3:port3"] # output to a kafka cluster |

| hosts: ["10.37.1.75:19092"] # single-node mode |

| topic: servers_topic # topic name |

| filebeat.inputs: |

| - type: log |

| paths: |

| - /path/to/server1/log/*.log # collect all .log files under server1 |

| # A line matching the pattern starts a new event; the non-matching lines that follow, up to the next match, are merged into it |

| multiline.pattern: '^[0-9]{4}-[0-9]{2}-[0-9]{2}' |

| multiline.negate: true |

| multiline.match: after |

| fields: |

| service: server1-service # index name for service 1 |

| - type: log |

| paths: |

| - /path/to/server2/log/*.log # collect all .log files under server2 |

| multiline.pattern: '^[0-9]{4}-[0-9]{2}-[0-9]{2}' |

| multiline.negate: true |

| multiline.match: after |

| fields: |

| service: server2-service # index name for service 2 |

| # Add further log files to collect as needed |

| processors: |

| - drop_fields: |

| fields: ["log","agent","ecs","@metadata"] |

| ignore_missing: true |
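The effect of the multiline settings in the configuration above (`negate: true` with `match: after`) can be illustrated with a small Python sketch. It assumes the pattern is meant to match lines that begin with a `yyyy-MM-dd` date, and it only imitates the grouping behaviour; it is not filebeat code, and `merge_multiline` is a hypothetical name.

```python
import re

# Assumed intent of multiline.pattern: a line starting with a yyyy-MM-dd date
START_RE = re.compile(r"^[0-9]{4}-[0-9]{2}-[0-9]{2}")


def merge_multiline(lines):
    """Group lines into events: a matching line starts a new event,
    and non-matching lines are appended to the event before them."""
    events = []
    for line in lines:
        if START_RE.match(line) or not events:
            events.append(line)
        else:
            events[-1] += "\n" + line
    return events


lines = [
    "2021-05-16 09:16:47.572 ERROR request failed",
    "java.lang.IllegalStateException: boom",
    "\tat com.example.Foo.bar(Foo.java:1)",
    "2021-05-16 09:16:48.000 INFO recovered",
]
for event in merge_multiline(lines):
    print(repr(event))
```

With this grouping a Java stack trace stays attached to the log line that produced it instead of being indexed as separate documents.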

- Start filebeat in the background

| nohup ./filebeat -e -c filebeat.yml & |

|---|

Install logstash

- Upload the logstash package and extract it

| tar xvf logstash-xxx.tar.gz |

|---|

- Modify the configuration

| # Edit the configuration file (logstash.yml) |

|---|

| pipeline.batch.size: 10000 |

| pipeline.batch.delay: 5 |

| path.data: /app/logcenter/logstash_data # specify the directory and grant permissions on it |

| pipeline.workers: 16 |

| pipeline.batch.delay: 100 # duplicate of the pipeline.batch.delay key above; keep only one of the two values |

| config.reload.automatic: true |

| config.reload.interval: 10s |

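- Configure the pipeline file logstash-demo.conf as required; separate pipeline files can be configured for different integrations

| # Configuration |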

|---|

| input{ |

| kafka { |

| bootstrap_servers => "10.145.210.225:9092" # kafka address; separate multiple brokers with commas |

| auto_offset_reset => "latest" |

| enable_auto_commit => true |

| topics => ["servers_topic"] # topic name |

| group_id => "servers_topic_group" |

| consumer_threads => 12 |

| codec => json |

| } |

| } |

| filter { |

| mutate { |

| copy => { "message" => "log" } |

| } |

| mutate { |

| copy => { "[fields][service]" => "service" } |

| } |

| } |

| output{ |

| elasticsearch { |

| hosts => ["es1:port", "es2:port", "es3:port"] # ES cluster addresses |

| index => "%{[fields][service]}-%{+YYYY.MM.dd}" |

| } |

| } |
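As a rough illustration of what the filter and output sections above do to each event, the following Python sketch imitates the two copy steps and the index name expression. It is a simplified model and not logstash code: `apply_filter` and `target_index` are hypothetical names, the sample event is made up, and the sketch assumes the date part of the index is derived from `@timestamp` in UTC.

```python
from datetime import datetime, timedelta, timezone


def apply_filter(event):
    """Imitate the two mutate/copy steps: message -> log, [fields][service] -> service."""
    out = dict(event)
    if "message" in event:
        out["log"] = event["message"]
    service = event.get("fields", {}).get("service")
    if service is not None:
        out["service"] = service
    return out


def target_index(event):
    """Imitate index => "%{[fields][service]}-%{+YYYY.MM.dd}" (date assumed to be in UTC)."""
    day = event["@timestamp"].astimezone(timezone.utc).strftime("%Y.%m.%d")
    return f"{event['fields']['service']}-{day}"


# Made-up event, shaped like what filebeat sends with fields.service set
event = {
    "message": "2021-05-16 09:16:47.572 DEBUG sample line",
    "fields": {"service": "server1-service"},
    "@timestamp": datetime(2021, 5, 17, 17, 19, 32, tzinfo=timezone(timedelta(hours=8))),
}
processed = apply_filter(event)
print(processed["service"], target_index(processed))
```

Because the index name is built from `fields.service`, the value set in filebeat.yml for each input decides which index a service's logs end up in.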

- Start logstash in the background

| nohup ./bin/logstash -f config/logstash-demo.conf --path.data=./logstash_data > /dev/null 2>&1 & |

|---|

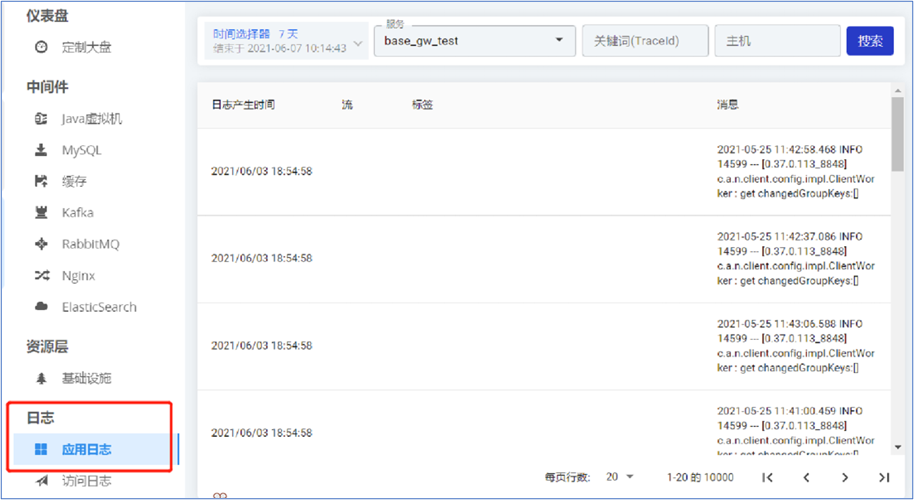

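Verification

Go to Monitoring > Logs > Application Logs, select the time range (default 30 minutes) and the service to view; the results can also be searched by keyword.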